On Decomposability in Robot Reinforcement Learning

© S. Höfer


Sebastian Höfer

Title: On Decomposability in Robot Reinforcement Learning

Abstract:

Reinforcement learning is a computational framework that enables machines to learn from trial-and-error interaction with their environment. In recent years, reinforcement learning has been applied successfully to a wide range of problem domains, including robotics. However, the success of reinforcement learning applications in robotics depends on a number of assumptions, such as the availability of large amounts of training data, highly accurate models of the robot and the environment, and prior knowledge about the task.

In this thesis, we study several of these assumptions and investigate how they can be generalized. To this end, we look at them from different angles. On the one hand, we examine them in two concrete applications of reinforcement learning in robotics: ball catching and learning to manipulate articulated objects. On the other hand, we develop an abstract explanatory framework that relates the assumptions to the decomposability of problems and solutions. Taken together, the concrete case studies and the abstract explanatory framework allow us to suggest how the aforementioned assumptions can be relaxed and how more effective solutions to the robot reinforcement learning problem can be developed.

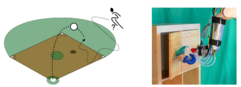

The first case study addresses the ball catching problem: how to run most effectively in order to catch a projectile, such as a baseball, that stays in the air for a long time. The question of the best solution to the ball catching problem has been the subject of intense scientific debate for almost 50 years. It turns out that this debate is not limited to the ball catching problem, but revolves around the research question of whether heuristic or optimization-based approaches are, in general, better suited to solving such problems. In this thesis, we study the ball catching problem as an instance of the heuristics vs. optimization debate. We examine two types of approaches to ball catching, one commonly regarded as heuristic and one based on optimization, and analyze their properties through a theoretical analysis and a series of simulation experiments. This investigation shows that neither type of approach can be considered superior for the ball catching problem, since each makes different assumptions and is therefore better suited to different variants of the problem. This result raises the question of what the main difference between these two types of approaches to ball catching is. We show that optimality is not a relevant distinguishing criterion: the approach to ball catching that is commonly regarded as heuristic can be called optimal under cross-task assumptions. This motivates us to look for a more appropriate explanatory framework for distinguishing between these solutions, and at the end of the thesis we discuss whether decomposability provides such a framework.

The second case study addresses the problem of learning to manipulate articulated objects. Articulated objects consist of rigid bodies connected by joints, such as doors, laptops, and drawers. In this thesis, we address three questions: how the kinematic structure of unknown articulated objects can be detected, how simple push and pull motions for actuating the detected joints can be learned, and how functional dependencies between joints, such as locking mechanisms, can be detected. Answering these questions requires reasoning about object parts and their relationships. We therefore turn to a learning paradigm that is well suited to such reasoning: relational reinforcement learning. To couple relational learning tightly with the perceptual and motor skills required to operate the manipulated objects, we propose two novel learning approaches: task-sensitive learning of relational forward models, and an approach for tightly coupling relational forward model learning and action parameter learning. We demonstrate the effectiveness of these approaches in simulated and real-world robot manipulation experiments.

In the final part of this thesis, we generalize the lessons learned from the two case studies to robot reinforcement learning and to decision-making problems. To this end, we introduce the decomposability spectrum as an explanatory framework for characterizing problems and solutions in decision making. This framework treats decomposability as a property that varies along a spectrum, and it suggests that the way a problem is decomposed has a significant influence on the ability to find an adequate solution. From this we conclude that the inability to find an effective solution can result either from a premature, inadequate decomposition of the problem or from approaching a non-decomposable problem by decomposing it completely. To support our view, we revisit the two case studies in the light of decomposability and provide additional evidence from the literature in artificial intelligence, cognitive science, and neuroscience. We conclude this thesis with suggestions for how the assumptions required for a successful application of reinforcement learning in robotics can be addressed.

June 2017