State Representation Learning

Project description

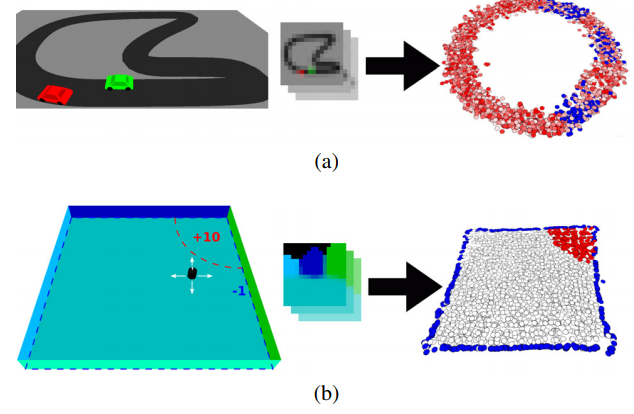

We want to enable robots to learn a broad range of tasks. Learning means generalizing knowledge from experienced situations to new situations. But in order to do so, the robots must already know what makes situations similar or different with respect to their current task. They need to be able to extract the right information from their sensory input that characterizes these situations. This information is what we call "state".

The information that should be included in the state differs depending on the task. For driving a car, for example, the state representation of the environment must include the road, other cars, traffic lights and so on. For cooking dinner in a kitchen, it must focus on completely different aspects of the environment.

Instead of relying on human-defined perception (a mapping from observations to the current state) for a specific task, robots must be able to autonomously learn which patterns in their sensory input are important. We think that they can learn this by interacting with the world: performing actions, observing how the sensory input changes, and noting which situations are rewarding. From such experience, robots can learn task-specific state representations by making them consistent with prior knowledge about the physical world, e.g. that changes in the world are proportional to the magnitude of the robot's actions, or that the state and the action together determine the reward.
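The two examples of prior knowledge named above can be written as loss terms on a learned state representation. The following is a minimal sketch under stated assumptions, not the implementation used in the project: it assumes discrete actions, a linear observation-to-state mapping, and hypothetical function names (`encode`, `proportionality_loss`, `reward_consistency_loss`). The first loss penalizes pairs of time steps in which the same action produced state changes of different magnitude; the second penalizes pairs in which the same action led to different rewards even though the states are close together.

```python
import numpy as np


def encode(observations, W):
    """Hypothetical linear mapping from observations (T, n) to states (T, d)."""
    return observations @ W


def _same_action_pairs(actions):
    """Boolean mask over index pairs (i < j) that share the same action."""
    same = actions[:, None] == actions[None, :]
    return np.triu(same, k=1)


def proportionality_loss(states, next_states, actions):
    """Same action should change the state by the same amount."""
    step = np.linalg.norm(next_states - states, axis=1)
    mask = _same_action_pairs(actions)
    if not mask.any():
        return 0.0
    gap = (step[:, None] - step[None, :]) ** 2
    return float(gap[mask].mean())


def reward_consistency_loss(states, actions, rewards):
    """Same action but different reward implies the states should differ."""
    mask = _same_action_pairs(actions) & (rewards[:, None] != rewards[None, :])
    if not mask.any():
        return 0.0
    sq_dist = ((states[:, None, :] - states[None, :, :]) ** 2).sum(axis=-1)
    return float(np.exp(-sq_dist[mask]).mean())
```

In such a setup, the mapping parameters `W` would be adjusted to minimize a weighted sum of both losses over recorded experience; the weighting and the optimizer are left open here.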

Action and Forward Model Learning

Project description

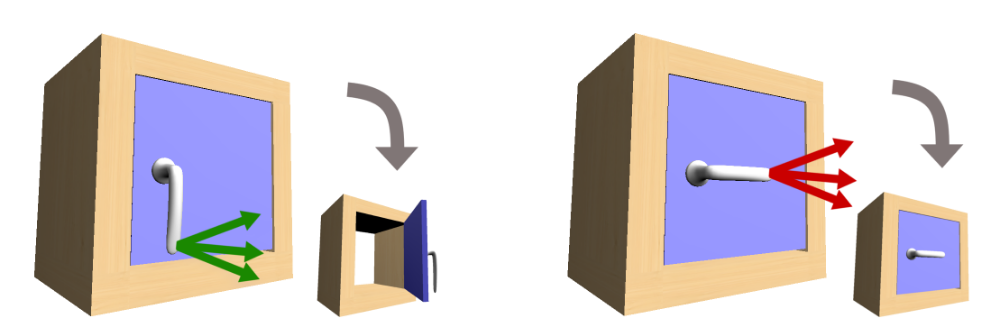

In order to learn suitable state representations, the robot requires a set of task-relevant actions, and must know how to execute them. But we can also look at the orthogonal problem: how can the robot learn suitable actions?

In our work, we study how to use knowledge about the state to learn better actions. This motivates our approach, coupled action parameter and effect learning (CAPEL): we jointly learn the parametrizations of actions and a forward model for each action. These forward models predict the effects of each action, given the state of the world, and allow the robot to select the right action for a task.

Why do we try to solve these two complex learning problems together? We argue that they are tightly coupled: a forward model is only valid if the underlying action parametrization reliably evokes the effects the model predicts. Conversely, an action is only relevant if the robot can predict its effects with high certainty. The two learning problems should therefore be solved jointly.
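The coupling described above can be illustrated with a toy example. This is only a sketch under strong assumptions and not the CAPEL algorithm itself: the one-dimensional world `simulate_push`, the candidate `force` values, and all function names are hypothetical. For each candidate action parameter, a linear forward model is fit to observed effects, and the parameter whose effects the model predicts best is kept.

```python
import numpy as np


def simulate_push(state, force, rng):
    """Hypothetical toy world: a push shifts the state by `force`.

    Effects are assumed to be most repeatable near force = 1.0.
    """
    noise_scale = 0.02 + abs(force - 1.0)
    return state + force + rng.normal(0.0, noise_scale, size=state.shape)


def fit_forward_model(states, next_states):
    """Least-squares fit of next_state = w * state + b; returns (coef, mse)."""
    X = np.column_stack([states, np.ones_like(states)])
    coef, *_ = np.linalg.lstsq(X, next_states, rcond=None)
    residual = next_states - X @ coef
    return coef, float(np.mean(residual ** 2))


def select_action_parameter(candidates, n_samples=200, seed=0):
    """Keep the parameter whose forward model has the lowest prediction error."""
    rng = np.random.default_rng(seed)
    best = None
    for force in candidates:
        states = rng.uniform(-1.0, 1.0, size=n_samples)
        next_states = simulate_push(states, force, rng)
        coef, mse = fit_forward_model(states, next_states)
        if best is None or mse < best[2]:
            best = (force, coef, mse)
    return best


force, coef, mse = select_action_parameter([0.5, 1.0, 1.5])
```

The forward model returned here is only meaningful for the selected `force`: a different parametrization would produce different effects and require a different model, which is the dependency the paragraph above points to.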

Learning with Side Information

Project description

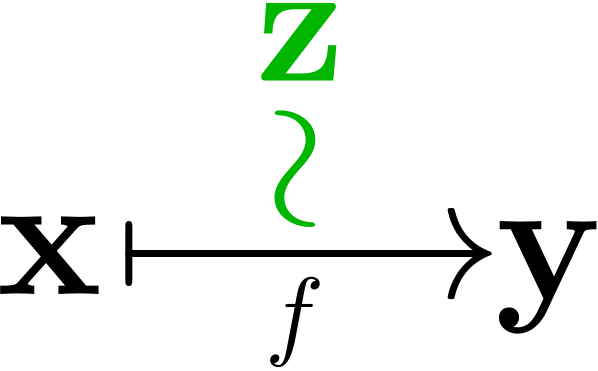

These approaches for learning state and action representations all follow a common theme: they exploit information that is relevant for the task, but that is not input or output of the function that is learned (e.g., the actions are used to learn a mapping from observation to states, but they are not required for estimating the state). This kind of information is termed side information.
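As an illustration of this idea, the sketch below uses side information only during training. It is a simplified example under stated assumptions, not the API of the published code: the function names are hypothetical, the models are linear, and the side information `Z_side` is assumed to be available for many samples while the main labels `y_main` exist for only a few. The representation is fit to the side information, and the main task is then learned on top of that representation.

```python
import numpy as np


def fit_with_side_information(X_side, Z_side, X_main, y_main):
    """Learn x -> h from side information, then h -> y from the main labels."""
    # Representation: h = x @ W, fit so that h approximates the side information.
    W, *_ = np.linalg.lstsq(X_side, Z_side, rcond=None)
    # Main task: linear model with a bias term on top of the representation.
    H = np.column_stack([X_main @ W, np.ones(len(X_main))])
    v, *_ = np.linalg.lstsq(H, y_main, rcond=None)
    return W, v


def predict(X, W, v):
    """Prediction needs only X; the side information is not an input here."""
    H = np.column_stack([X @ W, np.ones(len(X))])
    return H @ v


# Synthetic data: y depends on x only through a 2-D quantity z = x @ A.
rng = np.random.default_rng(0)
A = rng.normal(size=(8, 2))
X_side = rng.normal(size=(50, 8))
Z_side = X_side @ A
X_main = rng.normal(size=(5, 8))  # only five labeled examples
y_main = (X_main @ A) @ np.array([2.0, -1.0]) + 0.5

W, v = fit_with_side_information(X_side, Z_side, X_main, y_main)
X_test = rng.normal(size=(20, 8))
y_test = (X_test @ A) @ np.array([2.0, -1.0]) + 0.5
y_pred = predict(X_test, W, v)
```

With only five labeled examples, a direct regression from the eight-dimensional input to `y` would be underdetermined; the representation learned from side information reduces the main task to three parameters.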

Our work shows that learning with side information subsumes a variety of related approaches, e.g. multi-task learning, multi-view learning and learning using privileged information. This provides us with (i) a new perspective that connects these previously isolated approaches, (ii) insights into how these methods incorporate different types of prior knowledge, and hence implement different patterns, and (iii) a basis for applying these methods to novel tasks.

We have made our code for learning with side information publicly available: github.com/tu-rbo/concarne

Funding

Robotics-Specific Machine Learning (R-ML) funded by Deutsche Forschungsgemeinschaft (DFG), award number: 329426068, April 2017 - April 2020

Alexander von Humboldt professorship - awarded by the Alexander von Humboldt Foundation and funded through the Ministry of Education and Research, BMBF, July 2009 - June 2014

Publications

2018

Differentiable Particle Filters: End-to-End Learning with Algorithmic Priors

Proceedings of Robotics: Science and Systems, 2018

2016

End-To-End Learnable Histogram Filters

Workshop on Deep Learning for Action and Interaction at NIPS, December 2016

Unsupervised Learning of State Representations for Multiple Tasks

Workshop on Deep Learning for Action and Interaction at NIPS, December 2016

Coupled Learning of Action Parameters and Forward Models for Manipulation

IEEE/RSJ International Conference on Intelligent Robots and Systems, pages 3893-3899, October 2016

Patterns for Learning with Side Information

February 2016

© RBO
